Plagiarism is a serious issue that can harm your reputation and negatively impact your business. Content creators, marketers, and business owners should all understand how to prevent it, because plagiarism undermines the hard work that goes into producing high-quality, unique content.

As a content creator, it can be disheartening to see your work being plagiarized by others. And if you use a professional content service like ours to write high-quality, unique content for you, then you will definitely want to protect your investment from plagiarism.

When it comes to preventing plagiarism, it’s important to take a proactive approach and use a combination of on-site methods and online tools.

One of the most effective ways to protect yourself from plagiarism is to use plagiarism detection tools. These tools scan the internet for instances of plagiarism and can alert you if your content is being used without your permission. Some popular plagiarism detection tools include Copyscape, Grammarly, and Quetext. I will talk more about those later.

Another way to prevent plagiarism is to include a copyright notice on your work. This notice should include your name, the date of publication, and the words “All rights reserved.” This notice makes it clear that your work is protected by copyright law and cannot be used without your permission.

You can also protect yourself from plagiarism by being aware of how your work is being shared and used online and making sure that it is properly credited. You should be mindful of the platforms and websites you use to share your work and the potential risks of plagiarism on those platforms.

There are also some technical solutions to prevent plagiarism (at its root) on your website. So let’s start with those.

On-site methods

- Inserting an RSS footer on each new item within a feed is an effective way to make sure content taken by scraping bots still points back to your site.

- Disabling the ability to copy and paste text from web pages can also help to prevent plagiarism.

RSS Footer

An RSS (Really Simple Syndication) footer is a small piece of text that is added to the end of each item within an RSS feed. This text can include information such as the source of the content, the date it was published, and a link to the original source. Adding an RSS footer to each new item in a feed can help protect against scraping bots, which are automated scripts that crawl the internet and collect content from websites without permission.

When a scraping bot collects items from an RSS feed, it typically takes each item in full, footer included. If the scraped content is later republished on another website, the footer appears with it, giving a clear indication of the original source and making plagiarism easier to identify. The link in the footer also helps readers find and access the original content.
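As a rough illustration of the idea, the sketch below appends an attribution footer to an item's content before it goes into a feed. The function names, the item fields (`title`, `url`, `publishedAt`, `content`), and the example.com address are all assumptions made for this example. In practice, most sites would add the footer through their CMS or feed settings rather than hand-written code.

```javascript
// Minimal sketch: append an attribution footer to each item before it is
// written into an RSS feed. All names and fields here are hypothetical.

// Escape characters that are unsafe inside HTML text or attribute values.
function escapeHtml(text) {
  return String(text)
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Build the footer: source name, publication date, and a link back.
function buildRssFooter(item, siteName) {
  const published = item.publishedAt.toISOString().slice(0, 10); // YYYY-MM-DD (UTC)
  return (
    `<p>This post first appeared on ${escapeHtml(siteName)} on ${published}. ` +
    `Read the original at <a href="${escapeHtml(item.url)}">${escapeHtml(item.title)}</a>.</p>`
  );
}

// Return a copy of the item whose content ends with the footer.
function withRssFooter(item, siteName) {
  return { ...item, content: item.content + "\n" + buildRssFooter(item, siteName) };
}

const example = withRssFooter(
  {
    title: "How to Prevent Plagiarism",
    url: "https://example.com/prevent-plagiarism",
    publishedAt: new Date("2023-01-15T00:00:00Z"),
    content: "<p>Post body goes here.</p>",
  },
  "Example Blog"
);
console.log(example.content);
```

Because the footer is part of the item's content, a scraper that copies the item wholesale carries the link back to the original along with it.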

Disabling copy text

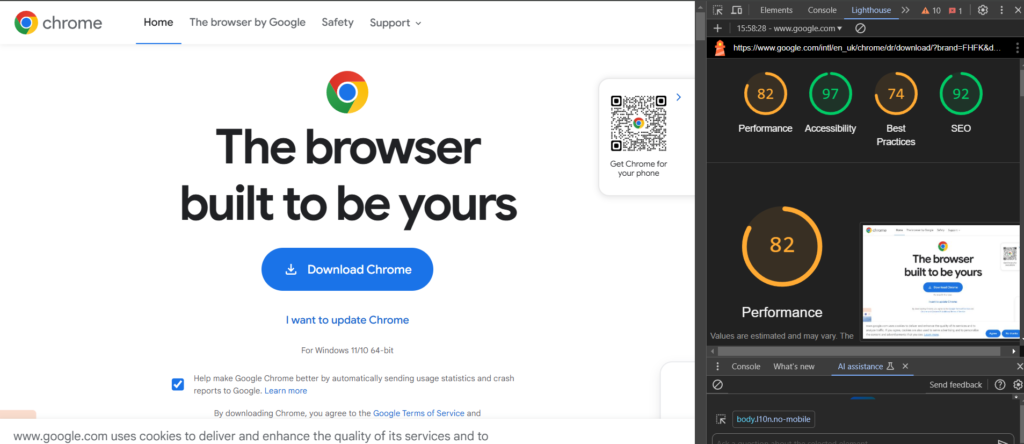

JavaScript

One way to stop users from copying the text from your web pages is to use JavaScript to disable the right-click menu. This prevents visitors from copying text through the context menu, although keyboard shortcuts such as Ctrl+C are not affected. It’s also important to note that this method blocks legitimate uses of right-click, such as saving images or opening links in new tabs.
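A minimal sketch of this approach, assuming you want to suppress the context menu for the whole page, could look like the following. `disableContextMenu` is a hypothetical helper name; `contextmenu` is the standard browser event fired on right-click.

```javascript
// Sketch: suppress the browser context menu on a given target.
// `disableContextMenu` is a hypothetical helper name.
function disableContextMenu(target) {
  const handler = (event) => event.preventDefault();
  target.addEventListener("contextmenu", handler);
  // Return a function that restores normal right-click behaviour.
  return () => target.removeEventListener("contextmenu", handler);
}

// In a browser, apply it to the whole page.
if (typeof document !== "undefined") {
  disableContextMenu(document);
}
```

Passing a single element instead of `document` limits the effect to that element, which leaves right-click working on images and links elsewhere on the page.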

CSS Property

Another way to disable the ability to copy and paste text is by using CSS to make the text unselectable. This can be done by applying the CSS property “user-select: none” to the text on the page. This method is less intrusive than disabling the right-click menu, but it still prevents visitors from selecting and copying text, which can help prevent plagiarism.
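In a stylesheet, this is a single rule such as `article p { user-select: none; }`. The same property can also be set from a script, as in the sketch below. `makeUnselectable` is a hypothetical helper name, and the `article p` selector is an assumption about how the page is marked up.

```javascript
// Sketch: make elements unselectable by setting the CSS `user-select`
// property from script. `makeUnselectable` is a hypothetical name.
function makeUnselectable(elements) {
  for (const el of elements) {
    el.style.userSelect = "none";
    el.style.webkitUserSelect = "none"; // prefixed form used by Safari
  }
}

// In a browser, apply it to article paragraphs (assumed selector).
if (typeof document !== "undefined") {
  makeUnselectable(document.querySelectorAll("article p"));
}
```

Limiting the selector to body text keeps form fields and code snippets selectable for visitors who need them.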

Online tools

There are a variety of plagiarism detection tools available online, which can be used to check your content for plagiarism before it is published. Some popular options include:

Copyscape

Copyscape is a plagiarism detection service that allows users to check if content has been copied or published elsewhere on the internet. It works by scanning the web for pages that contain identical or similar text. The service is typically used by writers, bloggers, and content creators to prevent plagiarism by ensuring their work is original and not being used without their consent. It is a trusted tool with a good detection rate, and some core features are free.

Scribbr

Scribbr provides editing and proofreading services for academic documents, essays, dissertations, and theses, and it also offers a plagiarism checker to help ensure that work is original. The checker has a well-designed user interface and a good detection rate, and it scans more than just websites, which really helps to prevent plagiarism.

Grammarly

Known for grammar and spelling checks, Grammarly also has great plagiarism detection features. The plagiarism detection tool compares the text in a document to billions of web pages and publications to check for potential plagiarism. It can also check for matching text within a user’s previous documents. This can be useful for students, educators, and professionals to ensure that their work is original and properly cited.

Duplichecker

Duplichecker provides plagiarism detection by allowing users to upload documents or paste text into a text box. The service compares the submitted text to billions of web pages and other documents to detect any potential plagiarism. It can be useful for checking the originality of your writing and finding any unreferenced sources. Duplichecker appears to be a reliable tool for preventing plagiarism, with a solid detection rate.

Quetext

Quetext provides plagiarism detection using advanced algorithms to check text against billions of web pages and other sources to detect potential plagiarism. It provides a detailed plagiarism report that highlights any matching text, as well as the sources it was found in. You can upload multiple files at once, and the tool can be used for various types of documents, including academic papers, articles, and blog posts.
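All of the tools listed above compare your text against other sources. To give a feel for what "matching text" can mean, here is a rough sketch that scores the overlap between two passages using shared three-word sequences. This is an illustrative technique only (Jaccard similarity over word shingles), not a description of how any of these services works internally, and every function name is hypothetical.

```javascript
// Rough illustration of text-overlap scoring; not how any named tool works.

// Split text into lowercase words, ignoring punctuation.
function words(text) {
  return text.toLowerCase().match(/[a-z0-9']+/g) || [];
}

// Collect every run of `size` consecutive words ("shingles") into a set.
function shingles(text, size = 3) {
  const w = words(text);
  const set = new Set();
  for (let i = 0; i + size <= w.length; i++) {
    set.add(w.slice(i, i + size).join(" "));
  }
  return set;
}

// Jaccard similarity: shared shingles divided by all distinct shingles.
function similarity(a, b, size = 3) {
  const sa = shingles(a, size);
  const sb = shingles(b, size);
  if (sa.size === 0 && sb.size === 0) return 0;
  let shared = 0;
  for (const s of sa) if (sb.has(s)) shared++;
  return shared / (sa.size + sb.size - shared);
}

const original = "Plagiarism is a serious issue that can harm your reputation.";
const copied = "Plagiarism is a serious issue that can harm your business.";
console.log(similarity(original, copied).toFixed(2));
```

A score near 1 means the two passages share most of their three-word sequences, while a score near 0 means they share almost none. Real services also have to find candidate pages across the web, which this sketch does not attempt.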

Worth Noting

It is worth noting that while these tools can be very helpful in detecting plagiarism, they are not foolproof. It’s always a good idea to manually review the content yourself and to implement additional measures to prevent plagiarism. It is also important to stay up to date with the latest technologies and methods used by plagiarists to ensure that you are using the most effective tools.

And of course, recent developments in AI have raised concerns around low-quality, uncredited content. Lots of AI tools scrape the web for examples and relevant content, causing many to question how ethical this is, especially when it comes to AI-generated images. With written content, it is a bit like researching online before you write, except that it can be done in seconds. Check out this article for ways you can spot AI-generated content.